In the previous post, I noted that a chemistry publisher is about to repeat an earlier experiment in serving pre-prints of journal articles. It would be fair to suggest that, following the first great period of journal innovation (the boom in rapidly published “camera-ready” articles in the 1960s), the next period of rapid innovation started around 1994, driven by the uptake of the World-Wide Web. The CLIC project[1] aimed to embed additional data-based components, in the form of pop-up interactive 3D molecular models and spectra, into the online presentation of the journal Chemical Communications. The Internet Journal of Chemistry was designed from scratch to take advantage of this new medium.[2] Here I take a look at one recent experiment in innovation that incorporates “augmented reality”.[3]

The title is interesting: “Combination of Enabling Technologies to Improve and Describe the Stereoselectivity of Wolff–Staudinger Cascade Reaction”. One of these technologies is the “microwave-assisted flow generation of primary ketenes by thermal decomposition of α-diazoketones at high temperature”, but the journal presentation itself attempts the “faster interpretation of computed data via a new web-based molecular viewer, which takes advantage from Augmented Reality (AR) technology”. This component can be accessed directly at https://leyscigateway.ch.cam.ac.uk/staudinger/ and is not incorporated into the journal infrastructure as the CLIC project attempted; it is perhaps closer to the model I noted in the previous post, in which (FAIR) data associated with the article is hosted separately from the journal.

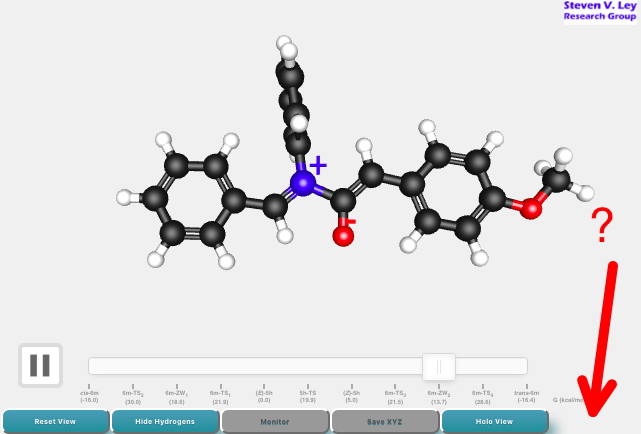

What happens next depends rather on the Web browser you are using. With many browsers and tablets, only a conventional 3D molecular presentation appears, and there is no button where the red arrow points. The reason, you will discover, is that “Augmented Reality is not available in your browser, as the getUserMedia() API is not supported”.
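For the curious, a page can test for camera access in this way before deciding whether to offer an AR button. What follows is a minimal, hypothetical TypeScript sketch, not the code used by the viewer described here; the names `NavigatorLike` and `supportsGetUserMedia` are my own. It checks only for the modern promise-based `navigator.mediaDevices.getUserMedia()` (older browsers exposed prefixed variants), and current browsers generally expose `navigator.mediaDevices` only on pages served over HTTPS.

```typescript
// Hypothetical structural type covering only the parts of `navigator` we inspect.
// Passing it in (rather than reading the global) keeps the check testable outside a browser.
interface NavigatorLike {
  mediaDevices?: {
    getUserMedia?: (constraints: { video: boolean; audio: boolean }) => Promise<unknown>;
  };
}

// Returns true when the modern promise-based getUserMedia() API is exposed.
// A viewer could use a check like this to decide whether to show an AR button.
function supportsGetUserMedia(nav: NavigatorLike | undefined): boolean {
  return typeof nav?.mediaDevices?.getUserMedia === "function";
}

// In a browser one might call it with the real global, e.g.:
//   const showArButton = supportsGetUserMedia(globalThis.navigator as NavigatorLike);
```

Injecting the navigator object is simply a design choice that lets the same check run, and be tested, outside a browser.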

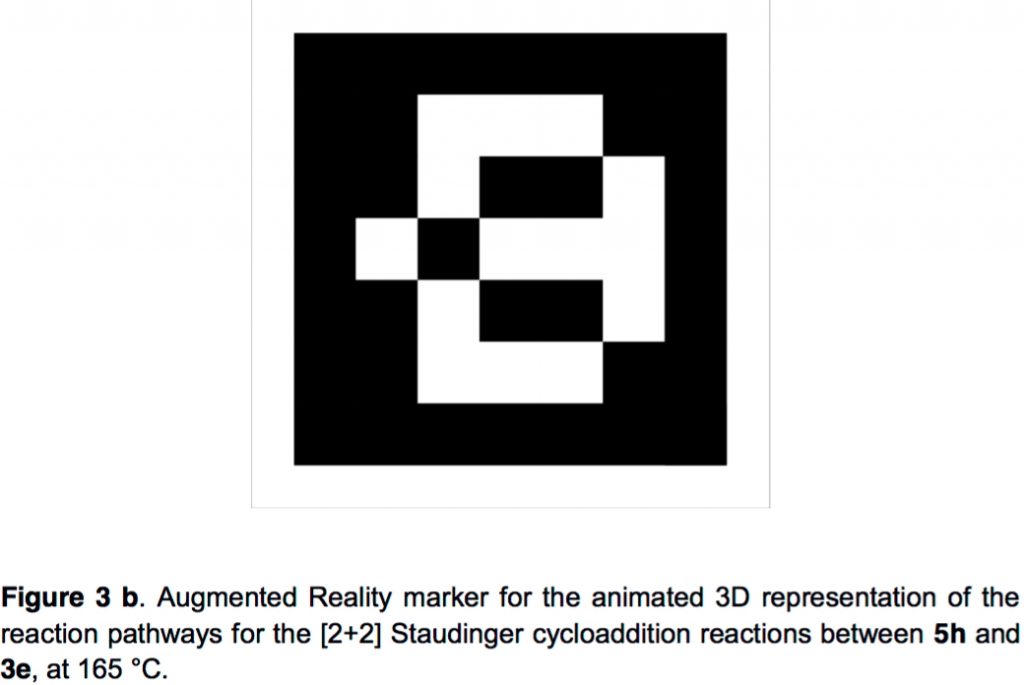

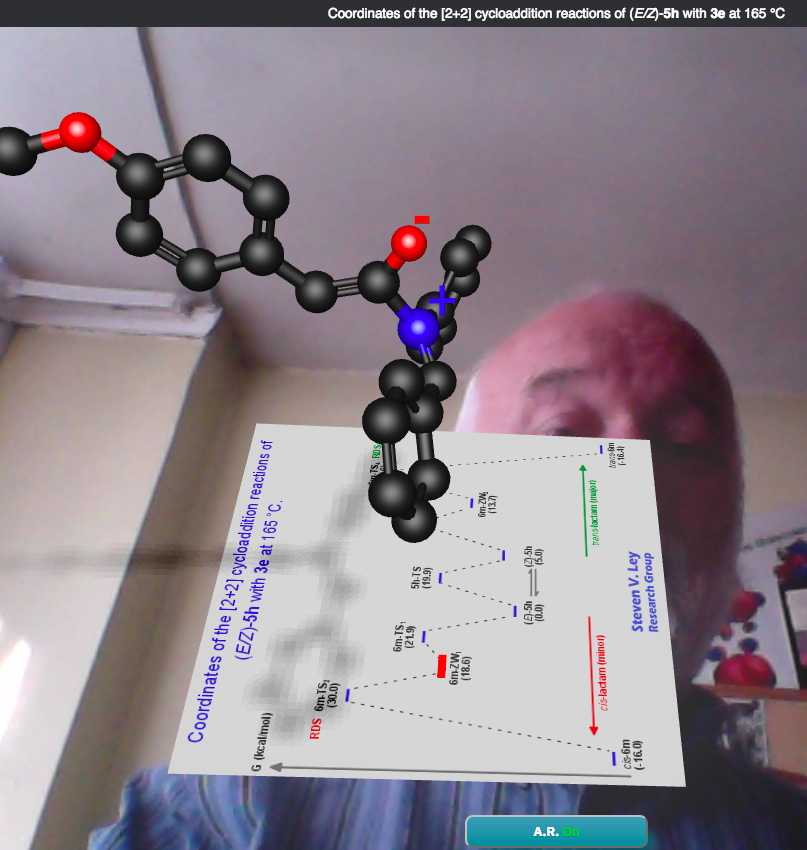

Some browsers (the latest Opera, Firefox and Chrome) do support this feature, and a new AR button appears. Selecting it combines the video from the device camera with the 3D molecular model, so that the molecule now floats in the scene captured by the camera (in the case below, the room I am sitting in). After a few seconds you are urged to “point the camera towards the AR marker”. The supporting information contains such AR markers as a navigation aid to the 3D coordinates it holds. An example is:

If this marker is now brought into the camera view (by printing it, sic, and holding it in front of the camera), it resolves into further data relevant to the molecule of interest, layered into the existing scene of the room and the molecule. The marker above resolves to a reaction energy profile, which reveals where the specific molecule sits energetically in the overall reaction.

This layering of “heads up” molecular data into a scene comprising a 3D molecular model and the human viewer of that molecule captured in video is what defines the concept of “augmented reality” (the data being the augmentation, rather than the human).

Having tried it out, I was left wondering whether this is truly a great advance in enabling technology for chemistry journals. The camera seems to serve mainly to capture the AR markers contained in the supporting information; the image of the reader, apparently inspecting the molecule, could be regarded as a distraction. The AR markers (QR codes) are merely visual representations of a URL, and a URL in the form of a DOI (as used in this blog) is a rather more familiar way for most readers to locate data. The DOI, by the way, also carries further information in the form of metadata which, when registered with e.g. DataCite, enables the data to be found. Does the data need to be layered onto the molecule (and apparently floating in front of the reader) to become usable? Could it instead be placed in a pop-up or separate window of its own (as the 1994 CLIC project achieved)? Do the AR markers make the data FAIR? One can Find the data (albeit only by reading and printing the supporting information) and view it in the AR scene, but is it Accessible (can one access the underlying numerical data?), Interoperable (can it be placed into another program?) or Re-usable?
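To illustrate how little such a marker needs to hold, here is a minimal TypeScript sketch, assuming a marker simply encodes a resolvable DOI link (I have not checked what the markers in this article's supporting information actually encode). The function `doiToResolverUrl` is hypothetical; it only normalises a DOI into its https://doi.org/ form and makes no network request.

```typescript
// Hypothetical helper: turn a DOI (bare, "doi:"-prefixed, or already a doi.org URL)
// into the https://doi.org/ resolver URL that a QR-style marker could encode.
function doiToResolverUrl(doi: string): string {
  const bare = doi
    .trim()
    .replace(/^doi:/i, "")
    .replace(/^https?:\/\/(dx\.)?doi\.org\//i, "");
  // DOIs begin with the "10." directory indicator; reject anything else.
  if (!bare.startsWith("10.")) {
    throw new Error(`Not a DOI: ${doi}`);
  }
  // Percent-encode unsafe characters in each segment while keeping
  // the "/" between prefix and suffix readable.
  const path = bare.split("/").map(encodeURIComponent).join("/");
  return `https://doi.org/${path}`;
}

// e.g. doiToResolverUrl("doi:10.1055/s-0035-1562579")
//   gives "https://doi.org/10.1055/s-0035-1562579"
```

The same resolver URL can also, via content negotiation, return a DOI's registered metadata rather than its landing page when requested with a suitable Accept header; the sketch does not attempt this.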

As with all enabling technologies, one must always ask whether the technology helps or hinders, or whether the KISS principle (keep it simple) is sometimes better. It is, however, good to see research groups experimenting with these themes, and meanwhile readers can judge for themselves whether “heads up” AR augmentation of the data describing research is indeed the next big thing.

References

- D. James, B.J. Whitaker, C. Hildyard, H.S. Rzepa, O. Casher, J.M. Goodman, D. Riddick, and P. Murray‐Rust, "The case for content integrity in electronic chemistry journals: The CLIC project", New Review of Information Networking, vol. 1, pp. 61-69, 1995. https://doi.org/10.1080/13614579509516846

- S.M. Bachrach, and S.R. Heller, "The Internet Journal of Chemistry: A Case Study of an Electronic Chemistry Journal", Serials Review, vol. 26, pp. 3-14, 2000. https://doi.org/10.1080/00987913.2000.10764578

- S. Ley, B. Musio, F. Mariani, E. Śliwiński, M. Kabeshov, and H. Odajima, "Combination of Enabling Technologies to Improve and Describe the Stereoselectivity of Wolff–Staudinger Cascade Reaction", Synthesis, vol. 48, pp. 3515-3526, 2016. https://doi.org/10.1055/s-0035-1562579


I attended a presentation by Google yesterday, one of many companies apparently betting on one of the new “realities” (their focus is education). The list of these new “realities” is getting longer by the day:

1. Virtual reality (a scene, in 2D or 3D, composited entirely by computer)

2. Augmented reality (computer-composited information layered onto an image of the real world as recorded by a camera)

3. Mixed reality, in which computer-composited scenes are integrated into a real-world camera scene in such a way that you cannot see the join, technically at least.

This last (MR) can be seen in this YouTube video: https://www.youtube.com/watch?v=kw0-JRa9n94

One of the (hyped?) claims being made for MR is that the concept of a “screen” (computer, laptop, tablet, phone, watch) will be replaced by an MR presentation to your eyes (via wearable glasses, or even contact lenses!) in which the “screen” (where you can input and receive information) will be just another component, much as virtual keyboards are now accepted on tablets and phones.

If even a fraction of this proves not to be mere hype, then one might imagine that the way in which content (e.g. journals, lecture notes) is delivered in science, and in chemistry in particular, is set to change. But of course I am only too aware that paper (and nowadays even PowerPoint) has a pretty low failure rate. If one’s MR scene fails, imagine trying to get it fixed with an audience of 100+ people in front of you!